Table of contents

- Disclaimer

- Introduction

- LMS and LFS comparative datasets

- Weighting of the comparative datasets

- Method of statistical testing for comparative datasets

- Employment, unemployment and economic inactivity for people aged 16 years and over and aged from 16 to 64 years (not seasonally adjusted)

- Educational status and labour market status for people aged from 16 to 24 years (not seasonally adjusted)

- Full-time and part-time workers (not seasonally adjusted)

- Actual weekly hours worked (not seasonally adjusted)

- Conclusions

- Appendix A - Cohorts included in the LMS analysis data sub-set

- Appendix B - Additional downloads

1. Disclaimer

These Research Outputs are not official statistics relating to the labour market. Rather, they are published as outputs from research into an alternative prototype survey instrument (the Labour Market Survey (LMS)) to that currently used in the production of labour market statistics (the Labour Force Survey (LFS)).

It is important that the information and research presented here are read alongside the accompanying technical report to aid interpretation and to avoid misunderstanding. These Research Outputs must not be reproduced without this disclaimer and warning note.

2. Introduction

Between October 2018 and April 2019, the Office for National Statistics (ONS) conducted a large-scale, mixed-mode test of the Labour Market Survey (LMS). This test formed an important part of an ongoing research programme, which is being conducted as part of the ONS Census and Data Collection Transformation Programme. For a comprehensive summary of the context and design for this test, please see the accompanying LMS statistical test technical report.

The specific purpose of this report is to replicate, using LMS data, several core statistical estimates currently produced from the Labour Force Survey (LFS), and to compare them with those produced from a comparative LFS dataset. The analyses presented here do not aim to suggest that the experimental estimates produced from the LMS are better or more accurate than those produced from the LFS. Rather, the objective of this test is to help inform the evidence base for the transformation of labour market data, and to identify what further research and testing is required. Where significant differences have been identified, some of their potential drivers are briefly discussed and avenues for further analysis and consideration are suggested.

To enable a direct comparison with the LMS Test dataset, several important adjustments were made to LFS data collected for the equivalent quarter (November 2018 to January 2019) (see Section 3, LMS and LFS comparative datasets). The estimates produced from this LFS dataset – shown in the following tables and Appendix B – are only for the purpose of comparison with the LMS and are not comparable with published LFS estimates.

The comparisons in this report focus on five of the seven¹ main labour market-derived variables produced from LMS that are also used for the labour market monthly statistical bulletin:

- ILODEFR and INECAC – Economic activity

- CURED – Current education received

- SECJMBR – Whether has a second job / status in second job

- SUMHRS – Total actual hours worked in main and second job(s)

The equivalent published LFS tables whose estimates are replicated are listed below². These are not the estimates to which LMS data are being compared; they are listed for information only.

- EMP01 NSA: Full-time, part-time and temporary workers (not seasonally adjusted)

- HOUR01 NSA: Actual weekly hours worked (not seasonally adjusted)

Notes for: Introduction

- Only five of the seven main labour market variables are drawn upon because the other two do not have large enough sample sizes to provide insights backed by sufficient statistical power.

- Fully replicated and populated versions of these tables are available in Appendix B.

3. LMS and LFS comparative datasets

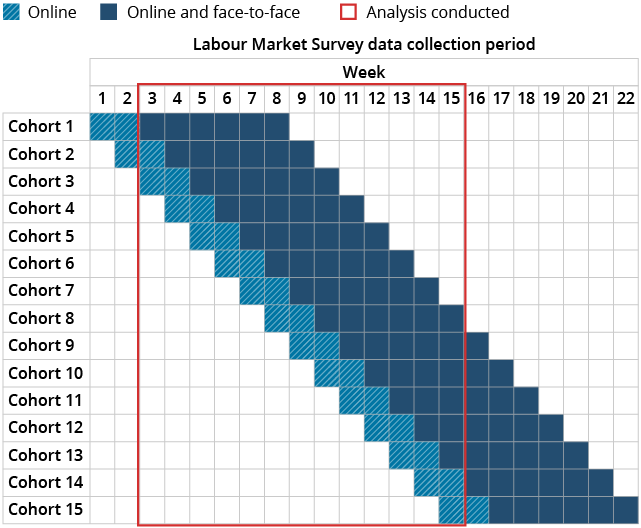

A comprehensive discussion of the sample and operational design used for the Labour Market Survey (LMS) Statistical Test is provided in the LMS statistical test technical report. The sample was split over 15 cohorts and was issued weekly.

The collection period for each cohort was eight weeks, which means the full LMS dataset (in terms of the reference weeks) spanned the period of 22 October 2018 to 25 March 2019. The reporting period for LFS outputs is 13 weeks – a quarter of a year – meaning a sub-set of the full LMS achieved sample was required to produce statistical comparisons. The LMS analysis dataset therefore includes data collected between weeks 3 and 15 (inclusive) of the total test period.
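The week arithmetic of this design can be checked with a short sketch. It assumes, for illustration only, that cohort k is issued at the start of test week k and stays in the field for exactly eight weeks. It counts cohorts rather than respondents, so it says nothing about achieved sample sizes.

```python
from collections import Counter

N_COHORTS = 15        # cohorts issued, one per week
COLLECTION_WEEKS = 8  # weeks each cohort stays in the field


def cohort_weeks(cohort: int) -> range:
    """Test weeks (1-based) in which a cohort is in the field.

    Assumes cohort k is issued at the start of week k (an illustrative assumption).
    """
    return range(cohort, cohort + COLLECTION_WEEKS)


# Number of cohorts in the field in each week of the whole test period.
active = Counter(w for c in range(1, N_COHORTS + 1) for w in cohort_weeks(c))
total_span = max(active) - min(active) + 1  # 15 + 8 - 1 weeks

# The analysis subset: weeks 3 to 15 inclusive.
analysis_weeks = range(3, 16)

print("weeks spanned by the full test:", total_span)
print("weeks in the analysis subset:", len(analysis_weeks))
print("cohorts in field per analysis week:", [active[w] for w in analysis_weeks])
```

Under these assumptions the full test spans 22 weeks and the subset covers 13, matching the quarter-length LFS reporting period. The number of cohorts in the field only reaches its maximum of eight from week 8 onwards.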

These 13 weeks were chosen because the achieved sample in the first two weeks of data collection was too low and it was exclusively an online sample. By week 3, the achieved sample was higher and contained both online and face-to-face cases. This LMS comparative dataset contained 13,335 responding individuals and was equivalent to a quarter of data collection between November 2018 and January 2019 (see Appendix A).

To produce a dataset as similar as possible to the LMS Statistical Test design, a subset of Labour Force Survey (LFS) data from the corresponding quarter of data collection was produced. This differed from the LFS dataset used to produce published estimates in the following important ways:

- the LFS comparative dataset included Wave 1 responses only; it excluded all responses achieved for the longitudinal component of the full quarterly LFS dataset (Waves 2 to 5)

- the comparative dataset excluded all responses achieved in Northern Ireland and north of the Caledonian Canal, to correspond with the sample area used for the LMS Statistical Test

- the resulting dataset was reweighted to account for the exclusion of these cases
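The first two restrictions amount to a simple filter on the sample. The sketch below is illustrative only: the field names (`wave`, `country`, `north_of_caledonian_canal`, `weight`) are hypothetical placeholders, not LFS variable names, and the records are made up. It also shows why the third step is needed: the original weights of the retained cases no longer sum to the population they were meant to represent.

```python
def lfs_comparative_subset(records):
    """Keep Wave 1 responses from the area covered by the LMS sample.

    Field names are hypothetical placeholders, not LFS variable names.
    """
    return [
        r for r in records
        if r["wave"] == 1                        # drop longitudinal Waves 2 to 5
        and r["country"] != "Northern Ireland"   # LMS sample excluded Northern Ireland
        and not r["north_of_caledonian_canal"]   # ...and areas north of the Caledonian Canal
    ]


sample = [
    {"id": 1, "wave": 1, "country": "England", "north_of_caledonian_canal": False, "weight": 500.0},
    {"id": 2, "wave": 3, "country": "England", "north_of_caledonian_canal": False, "weight": 480.0},
    {"id": 3, "wave": 1, "country": "Northern Ireland", "north_of_caledonian_canal": False, "weight": 450.0},
    {"id": 4, "wave": 1, "country": "Scotland", "north_of_caledonian_canal": True, "weight": 300.0},
    {"id": 5, "wave": 1, "country": "Wales", "north_of_caledonian_canal": False, "weight": 520.0},
]

subset = lfs_comparative_subset(sample)
kept = sum(r["weight"] for r in subset)
original = sum(r["weight"] for r in sample)
print("kept ids:", [r["id"] for r in subset])
print(f"weight retained: {kept} of {original}, so the subset must be reweighted")
```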

4. Weighting of the comparative datasets

The weighting process for the Labour Market Survey (LMS) Test is based upon the weighting principles for the Labour Force Survey (LFS), but has been expanded to account for some of the differences in collection designs between the LMS and the LFS. As with the LFS, the LMS weighting process assigns a calibration weight to all responding individuals with a derived economic activity status but does not assign a weight to non-responding individuals.

The first phase of LMS weighting closely replicated the LFS weighting (see Labour Force Survey User Guide Volume 1, Section 10 – Weighting the LFS Sample Using Population Estimates (PDF, 1.44MB)). Each responding individual was included in three separate calibration groups: region, sex, and age group. Within each calibration group, the weights summed to population totals. The LFS weighting process uses local authority (LA) districts as the geographical partition, whereas the LMS uses region because sample sizes by LA were too small; this change had a negligible impact on the weighting process.
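Calibrating to several sets of totals at once is often done by raking (iterative proportional fitting): the weights are scaled to match each set of totals in turn, repeatedly, until all are met at the same time. The sketch below rakes to region, sex and age-group totals. It is a generic illustration under simplifying assumptions, not the ONS weighting system (which is documented in the LFS User Guide and may use a different calibration method); the field names, respondents and population totals are made up.

```python
from collections import defaultdict


def rake(records, margins, max_iter=1000, tol=1e-10):
    """Scale each record's weight "w" until weighted totals match every margin.

    margins maps a variable name to {category: population total}.
    """
    for _ in range(max_iter):
        largest_adjustment = 0.0
        for var, targets in margins.items():
            # Current weighted total for each category of this variable.
            totals = defaultdict(float)
            for r in records:
                totals[r[var]] += r["w"]
            # Scale weights so this variable's totals match its targets exactly.
            for r in records:
                factor = targets[r[var]] / totals[r[var]]
                r["w"] *= factor
                largest_adjustment = max(largest_adjustment, abs(factor - 1.0))
        if largest_adjustment < tol:  # every margin already satisfied
            return records
    raise RuntimeError("raking did not converge")


# Illustrative respondents with equal starting weights (made-up data).
respondents = [
    {"region": "North", "sex": "M", "age": "16-34", "w": 1.0},
    {"region": "North", "sex": "F", "age": "35+", "w": 1.0},
    {"region": "South", "sex": "F", "age": "16-34", "w": 1.0},
    {"region": "South", "sex": "M", "age": "35+", "w": 1.0},
    {"region": "South", "sex": "F", "age": "35+", "w": 1.0},
]
# Made-up population totals; every margin sums to the same grand total (1,000).
margins = {
    "region": {"North": 400.0, "South": 600.0},
    "sex": {"M": 490.0, "F": 510.0},
    "age": {"16-34": 300.0, "35+": 700.0},
}
rake(respondents, margins)
for r in respondents:
    print(r["region"], r["sex"], r["age"], round(r["w"], 1))
```

Raking only converges when all margins share the same grand total and some set of positive weights satisfies them all, which is why the example totals are chosen to be consistent.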

Following the replicated LFS calibration method, an additional set of calibration controls was implemented. The weights were adjusted to account for potential bias stemming from the uneven distribution of the achieved sample over the selected weeks of the quarter. This was done by calculating the number of responding individuals in each rolling reference week (both online and face-to-face) and adjusting the weights to make this distribution more uniform over the 13-week period. An additional control was then added to account for variation in the proportions of online and face-to-face responders each week, again making these more uniform across the weeks of the selected period.
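The week and mode controls can be pictured as fixing, for every reference week, both that week's share of the total weight and its online/face-to-face split. The sketch below applies that idea as a single post-stratification step on hypothetical fields `week`, `mode` and `w`, with made-up data. It makes two simplifying assumptions: it forces exact uniformity, whereas the text describes making the variation "more uniform", and it runs on its own, whereas in practice these controls sit alongside the region, sex and age calibration.

```python
from collections import defaultdict


def even_out_weeks_and_modes(records):
    """Rescale weights "w" so each reference week carries an equal share of the
    total weight and each week's online/face-to-face split matches the overall split.

    Assumes every week contains respondents in every mode; an empty week-by-mode
    cell would leave that week short of its target.
    """
    weeks = {r["week"] for r in records}
    mode_total = defaultdict(float)  # weight by mode over the whole period
    cell_total = defaultdict(float)  # weight by (week, mode)
    for r in records:
        mode_total[r["mode"]] += r["w"]
        cell_total[(r["week"], r["mode"])] += r["w"]
    for r in records:
        # Target for a week-by-mode cell: the mode's overall weight spread evenly over the weeks.
        target = mode_total[r["mode"]] / len(weeks)
        r["w"] *= target / cell_total[(r["week"], r["mode"])]
    return records


# Made-up responses: week 3 is mostly online, week 4 mostly face-to-face.
responses = [
    {"week": 3, "mode": "online", "w": 100.0},
    {"week": 3, "mode": "online", "w": 100.0},
    {"week": 3, "mode": "f2f", "w": 100.0},
    {"week": 4, "mode": "online", "w": 100.0},
    {"week": 4, "mode": "f2f", "w": 100.0},
    {"week": 4, "mode": "f2f", "w": 100.0},
    {"week": 4, "mode": "f2f", "w": 100.0},
]
even_out_weeks_and_modes(responses)
for week in (3, 4):
    total = sum(r["w"] for r in responses if r["week"] == week)
    online = sum(r["w"] for r in responses if r["week"] == week and r["mode"] == "online")
    print(f"week {week}: total {total:.1f}, online share {online / total:.3f}")
```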

To produce an accurate comparison of LMS and LFS estimates, the effect of the longitudinal element (Waves 2 to 5) of the November 2018 to January 2019 LFS dataset needed to be excluded. The longitudinal cases were excluded, along with households in Northern Ireland/north of the Caledonian Canal as the LMS sample did not include such households, and the Wave 1 only cases were re-weighted using the same calibration controls with respect to age, sex and region.

5. Method of statistical testing for comparative datasets

A selection of core labour market estimates were derived using the Labour Market Survey (LMS) dataset – these derivations, and the variables used in their production, are documented in Appendix B. The methods of derivation used for the Labour Force Survey (LFS) dataset matched those used as part of standard LFS data processing. These are described in the LFS User Guide Volume 4 – LFS standard derived variables 2019 (PDF, 10.36MB).

For each survey estimate that was compared, the sampling error was computed using the Linearised Jackknife method. As the LFS and LMS samples were selected independently, the standard error of the difference between respective estimates was computed by taking the square root of the sum of the respective variances. When performing statistical significance tests, the Bonferroni correction was used to correct for multiple testing.
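The Linearised Jackknife itself is not reproduced here. As a simpler point of reference, the sketch below computes the plain delete-one-group (JK1) jackknife variance of a weighted ratio such as an employment rate: the ratio is recomputed with each primary sampling unit (PSU) left out in turn, and the spread of those replicate estimates gives the variance. It ignores stratification and the linearisation step, and the PSU totals in the example are invented.

```python
def jackknife_ratio_variance(psus):
    """Delete-one-PSU (JK1) jackknife variance of a ratio estimator.

    psus: list of (weighted numerator total, weighted denominator total), one pair per PSU.
    """
    g = len(psus)
    if g < 2:
        raise ValueError("need at least two PSUs")
    num = sum(n for n, _ in psus)
    den = sum(d for _, d in psus)
    # Leave each PSU out in turn. Reweighting the remaining PSUs by g/(g-1)
    # would cancel in a ratio, so it is omitted.
    replicates = [(num - n) / (den - d) for n, d in psus]
    mean_rep = sum(replicates) / g
    return (g - 1) / g * sum((r - mean_rep) ** 2 for r in replicates)


# Hypothetical PSUs: (weighted number employed, weighted population aged 16 to 64).
psus = [(30.0, 40.0), (25.0, 40.0), (35.0, 40.0), (28.0, 40.0)]
rate = sum(n for n, _ in psus) / sum(d for _, d in psus)
se = jackknife_ratio_variance(psus) ** 0.5
print(f"rate = {rate:.4f}, jackknife SE = {se:.4f}")
```

With equal denominators, as in this toy example, the result reduces to the familiar s²/g variance of a mean of PSU-level rates, which is a useful check on the implementation.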

As part of this research exercise, additional tables from the Office for National Statistics (ONS) labour market statistical bulletin³ were replicated, but these have not been included in this report. In most cases this is because, although the LMS Statistical Test had a large achieved sample size overall, some cohort sizes (when broken down by age, sex, educational status and so on) were too small to allow differences to be detected. Even in the tables considered in this report, there are large sampling errors for some estimates because of low cohort sizes. Future testing with larger sample sizes will improve the ability to detect differences. Despite the difference in achieved sample sizes, the characteristics of each achieved sample were broadly similar (further details can be found in the LMS statistical test characteristics report).

The confidence intervals reported in each table are based on the standard error of the difference: the lower and upper bounds are the difference in estimates minus and plus the 95% margin of error calculated from that standard error. For each estimate, statistical significance should therefore be read as follows:

| 95% Confidence interval lower bound (-/+) | 95% Confidence interval upper bound (-/+) | Significance |
|---|---|---|
| - | + | Not significant |
| - | - | Significant |
| + | + | Significant |

Table 1: How reported confidence intervals relate to statistical significance

If both bounds are negative or both are positive (that is, the interval between the two bounds does not cross zero), then a statistically significant difference has been detected at the 95% confidence level.
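The testing steps described in this section (independent samples, so the variances add; a normal-approximation interval; a Bonferroni-adjusted threshold) can be sketched as follows. The function name and the example standard errors are hypothetical, and the number of comparisons used for the Bonferroni correction is not stated here, so `n_tests` is left to the caller.

```python
from math import sqrt
from statistics import NormalDist


def compare_estimates(est_lms, se_lms, est_lfs, se_lfs, n_tests=1, alpha=0.05):
    """Difference between two independent estimates, with CI and Bonferroni test."""
    diff = est_lms - est_lfs
    # Independent samples: variances add, so SE(diff) = sqrt(var_lms + var_lfs).
    se_diff = sqrt(se_lms ** 2 + se_lfs ** 2)
    # Two-sided normal critical value (about 1.96 when alpha = 0.05).
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    ci = (diff - z_crit * se_diff, diff + z_crit * se_diff)
    # Two-sided p-value, compared with the Bonferroni-adjusted level alpha / n_tests.
    p_value = 2 * (1 - NormalDist().cdf(abs(diff) / se_diff))
    return diff, se_diff, ci, p_value, p_value < alpha / n_tests


# Hypothetical inputs, not figures from this report.
diff, se_diff, ci, p, sig_single = compare_estimates(10.0, 0.3, 9.0, 0.4)
*_, sig_five = compare_estimates(10.0, 0.3, 9.0, 0.4, n_tests=5)
print(f"difference {diff:.2f}, SE {se_diff:.2f}, 95% CI ({ci[0]:.2f}, {ci[1]:.2f}), p = {p:.4f}")
print("significant, 1 test:", sig_single, "| significant, 5 tests:", sig_five)
```

In this example the unadjusted 95% interval excludes zero, yet the difference would not pass a Bonferroni threshold based on five comparisons, which shows how much the correction can matter for borderline results.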

Although only 95% confidence interval bounds are displayed, significance was also tested at the 99% confidence level. All statistically significant differences discussed in the commentary of this report are significant at the 95% confidence level or greater. Where the difference between the LMS and LFS comparative estimates was statistically significant, this is marked in the tables as follows:

| Marker | Meaning |
|---|---|
| * | Observed difference in statistical estimates is significant at the 95% confidence level. |
| ** | Observed difference in statistical estimates is significant at the 99% confidence level. |

Table 2: How statistical significance is reported in tables
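Because each 95% interval is centred on the difference, the standard error of a difference can be recovered approximately from the published bounds, and the * and ** markers re-derived as a reading aid. The sketch assumes symmetric normal-approximation intervals; since the published bounds are rounded, rows close to a threshold may not reproduce the published marker. The helper names are hypothetical.

```python
from statistics import NormalDist

Z95 = NormalDist().inv_cdf(0.975)  # about 1.960
Z99 = NormalDist().inv_cdf(0.995)  # about 2.576


def se_from_ci(lower, upper, z=Z95):
    """Approximate SE of a difference from a symmetric 95% interval."""
    return (upper - lower) / (2 * z)


def significance_flag(diff, se_diff):
    """'**' if significant at 99%, '*' if at 95%, '' otherwise (Table 2 convention)."""
    z = abs(diff) / se_diff
    if z > Z99:
        return "**"
    if z > Z95:
        return "*"
    return ""


# (difference, CI lower bound, CI upper bound) as published in this report.
rows = {
    "Table 5, full-time education, total": (494, 148, 840),
    "Table 6, men in full-time education, total": (289, 43, 535),
    "Table 3, employment rate, all": (-0.7, -2.1, 0.8),
}
for name, (d, lo, hi) in rows.items():
    print(f"{name}: {significance_flag(d, se_from_ci(lo, hi)) or 'not significant'}")
```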

Where statistically significant differences were observed, potential drivers or reasons for these differences are considered. Because of the notable differences in survey design, it is not yet possible to fully explain the underlying causes in any of these cases. However, some initial thoughts are provided alongside recommendations for further research.

It is important to note that this test is a single point of comparison between the LMS and LFS, and therefore the absence of statistically significant differences cannot be taken to mean that significant differences would not be observed in future tests.

6. Employment, unemployment and economic inactivity for people aged 16 years and over and aged from 16 to 64 years (not seasonally adjusted)

Main findings:

- No statistically significant differences were found between the Labour Market Survey (LMS) test and the comparative Labour Force Survey (LFS) dataset for any of the main headline labour market estimates.

Table 3 shows employment rates for people aged 16 to 64 years and unemployment rates for people aged 16 years and over. The Labour Market Survey (LMS) employment rate was estimated at 75.0%, 0.7 percentage points less than the estimate produced from the Labour Force Survey (LFS) comparative dataset; this observed difference was not significant.

No significant differences in these headline figures between the two datasets were observed when they were broken down by sex. The LMS employment rate for men (78.8%) was 1.4 percentage points lower than the LFS comparison, while the LMS employment rate for women (71.3%) was 0.1 percentage points higher than the estimate from the LFS comparative dataset.

The LMS unemployment rate was estimated at 3.3%, 0.1 percentage points lower than the equivalent LFS estimate. Larger differences, although not statistically significant, were observed by sex: the LMS unemployment rate for men was estimated at 3.7%, 0.4 percentage points higher than the LFS comparative estimate, while the rate for women was estimated at 2.8%, 0.8 percentage points lower. Therefore, while the overall unemployment rate shows minimal difference and no statistically significant differences were found, the slight variations by sex require further analysis to understand. Further testing with larger sample sizes (and therefore less sampling variability) is required to help determine whether these are true differences.

Statistical estimates, % (November 2018 to January 2019)

| Sex | Measure | LMS dataset | LFS comparative dataset | Difference in estimates (percentage points)¹ | 95% Confidence interval lower bound | 95% Confidence interval upper bound |
|---|---|---|---|---|---|---|
| All | Employment rate (aged 16 to 64) | 75.0 | 75.7 | -0.7 | -2.1 | 0.8 |
| | Unemployment rate (aged 16 and over) | 3.3 | 3.4 | -0.1 | -0.8 | 0.5 |
| Men | Employment rate (aged 16 to 64) | 78.8 | 80.3 | -1.4 | -3.4 | 0.6 |
| | Unemployment rate (aged 16 and over) | 3.7 | 3.2 | 0.4 | -0.6 | 1.5 |
| Women | Employment rate (aged 16 to 64) | 71.3 | 71.2 | 0.1 | -2.3 | 2.4 |
| | Unemployment rate (aged 16 and over) | 2.8 | 3.6 | -0.8 | -1.8 | 0.2 |

Table 3: Employment and unemployment for people aged 16 years and over and aged from 16 to 64 years (not seasonally adjusted)

Table 4 shows estimates of economic inactivity rates. For each indicator the LMS estimate was higher than the equivalent LFS comparator, although none of the differences were statistically significant. The LMS estimates show an economic inactivity rate of 22.3% for those aged 16 to 64 years, 0.8 percentage points higher than the estimate from the LFS comparative dataset. The economic inactivity rate for those aged 16 years and over was 37.0%, 0.3 percentage points higher than the equivalent LFS estimate. In both datasets, the economic inactivity rate was higher for those aged 16 years and over than for those aged 16 to 64 years, because the wider age group includes respondents who identified as retired.

Looking at economic inactivity by sex, estimates from the LMS and LFS comparative datasets were found to be broadly comparable. The LMS estimates show an economic inactivity rate for men aged 16 to 64 years of 18.0%, 1.0 percentage point higher than the equivalent LFS estimate, while the economic inactivity rate for women aged 16 to 64 years was 26.6% on the LMS, 0.5 percentage points higher.

Statistical estimates, % (November 2018 to January 2019)

| Sex | Measure (economic inactivity rate) | LMS dataset | LFS comparative dataset | Difference in estimates (percentage points)¹ | 95% Confidence interval lower bound | 95% Confidence interval upper bound |
|---|---|---|---|---|---|---|
| All | 16 to 64 | 22.3 | 21.6 | 0.8 | -0.6 | 2.1 |
| | 16 and over | 37.0 | 36.7 | 0.3 | -0.9 | 1.4 |
| Men | 16 to 64 | 18.0 | 17.0 | 1.0 | -0.8 | 2.9 |
| | 16 and over | 32.0 | 31.6 | 0.4 | -1.2 | 2.0 |
| Women | 16 to 64 | 26.6 | 26.1 | 0.5 | -1.8 | 2.8 |
| | 16 and over | 41.7 | 41.6 | 0.1 | -1.7 | 1.9 |

Table 4: Economic inactivity for people aged 16 years and over and aged from 16 to 64 years (not seasonally adjusted)

7. Educational status and labour market status for people aged from 16 to 24 years (not seasonally adjusted)

Main findings:

- Significant differences were observed in the estimates of people aged 16 to 24 years in full-time education.

- Significantly more men and women in this age group were estimated to be in full-time education and also in employment in the Labour Market Survey (LMS) test.

- These differences need further investigation in the context of the changes made to the design of the prototype LMS; specifically, the questionnaire design will be reviewed and the approach to sampling will be investigated further.

Table 5 shows estimates of the labour market status of people aged 16 to 24 years by education status. The LMS estimates show 3,419,000 people in full-time education, 494,000 (16.8%) more than those produced from the comparative Labour Force Survey (LFS) dataset. Conversely, they show 3,318,000 people not in full-time education, 494,000 fewer than the comparative LFS estimate. Both differences were statistically significant.

Within these totals, Labour Market Survey (LMS) estimates show 1,136,000 workers in full-time education, 365,000 (47.5%) more than the corresponding figure from the LFS comparative dataset; this difference was also statistically significant. The observed difference in education status therefore appears to be driven primarily by those in employment, with more employed people aged 16 to 24 years estimated to be in full-time education in the prototype LMS survey. Differences for people in full-time education with other economic activity statuses (unemployed or economically inactive) were not statistically significant. Among people not in full-time education, the LMS estimates of 372,000 fewer employed people and 125,000 fewer unemployed people were statistically significant.

Statistical estimates, thousands (November 2018 to January 2019)

| All (aged 16 to 24) | Measure | LMS dataset | LFS comparative dataset | Difference in estimates¹ | 95% Confidence interval lower bound | 95% Confidence interval upper bound |
|---|---|---|---|---|---|---|
| Full-time education | Total | 3,419 | 2,925 | 494** | 148 | 840 |
| | Employed | 1,136 | 770 | 365** | 124 | 607 |
| | Unemployed | 121 | 62 | 59 | -13 | 130 |
| | Economically inactive | 2,162 | 2,092 | 70 | -232 | 372 |
| Not full-time education | Total | 3,318 | 3,812 | -494** | -840 | -148 |
| | Employed | 2,522 | 2,893 | -372* | -687 | -56 |
| | Unemployed | 188 | 313 | -125* | -233 | -18 |
| | Economically inactive | 608 | 605 | 3 | -178 | 184 |

Table 5: Educational status and labour market status for people aged from 16 to 24 years (not seasonally adjusted)

The comparative figures shown in Table 5 are broken down for men and women in Table 6 and Table 7 respectively. Table 6 shows that the LMS dataset produced an estimate of 1,721,000 men in full-time education, 289,000 (20.2%) more than reported for the LFS comparative dataset, a statistically significant difference. Moreover, the LMS estimated 475,000 male workers in full-time education, 179,000 (58.8%) more than the comparative LFS figure, which was also a statistically significant difference. Finally, almost twice as many men were estimated to be unemployed and in full-time education on the LMS, although this difference was not statistically significant.

Statistical estimates, thousands (November 2018 to January 2019)

| Men (aged 16 to 24) | Measure | LMS dataset | LFS comparative dataset | Difference in estimates¹ | 95% Confidence interval lower bound | 95% Confidence interval upper bound |
|---|---|---|---|---|---|---|
| Full-time education | Total | 1,721 | 1,432 | 289* | 43 | 535 |
| | Employed | 475 | 299 | 179* | 8 | 343 |
| | Unemployed | 60 | 32 | 28 | -26 | 81 |
| | Economically inactive | 1,185 | 1,100 | 86 | -147 | 318 |
| Not full-time education | Total | 1,720 | 2,009 | -289* | -535 | -43 |
| | Employed | 1,336 | 1,530 | -194 | -432 | 44 |
| | Unemployed | 106 | 193 | -87 | -181 | 7 |
| | Economically inactive | 278 | 286 | -8 | -136 | 120 |

Table 6: Educational status and labour market status for men aged from 16 to 24 years (not seasonally adjusted)

Table 7 shows an LMS estimate of 1,698,000 women on full-time education courses, 205,000 (13.7%) more than the LFS comparative dataset. Unlike the corresponding estimates for men (Table 6), this difference was not found to be statistically significant.

The LMS estimated 660,000 female workers in full-time education, 190,000 more than the LFS comparative dataset estimate; this difference was statistically significant. As for men, the LMS estimated around twice as many unemployed women in full-time education as the LFS comparative dataset, but this difference was not statistically significant. Because the achieved sample contained very few such responses (equating to fewer than 100,000 people in the weighted counts for both the LMS and the LFS comparative dataset), sampling variability cannot be discounted as a reason for these differences. A larger sample in another test using the same survey design could potentially show these differences to be significant.
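One rough way to gauge how much larger a sample would need to be is to assume that the standard error of a difference shrinks with 1/√n for both surveys and that the true difference equals the one observed. The difference then sits exactly on the 95% boundary when both samples are scaled by (half-width of the interval / difference)². This is a back-of-envelope illustration, not a power calculation: at that size, a difference of this magnitude would be detected only about half the time. The function name is hypothetical, and the inputs are the rounded values from Tables 6 and 7.

```python
def sample_multiplier(diff, ci_lower, ci_upper):
    """Factor by which both samples must grow for `diff` to reach the 95% boundary.

    Assumes the standard error of the difference scales with 1 / sqrt(n).
    """
    half_width = (ci_upper - ci_lower) / 2  # equals z * SE for a symmetric interval
    return (half_width / abs(diff)) ** 2


# Unemployed and in full-time education, from Table 7 (women) and Table 6 (men).
women = sample_multiplier(31, -22, 83)
men = sample_multiplier(28, -26, 81)
print(f"women: x{women:.1f}, men: x{men:.1f}")
```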

Statistical estimates, thousands (November 2018 to January 2019)

| Women (aged 16 to 24) | Measure | LMS dataset | LFS comparative dataset | Difference in estimates¹ | 95% Confidence interval lower bound | 95% Confidence interval upper bound |
|---|---|---|---|---|---|---|
| Full-time education | Total | 1,698 | 1,493 | 205 | -34 | 444 |
| | Employed | 660 | 471 | 190* | 0² | 379 |
| | Unemployed | 61 | 30 | 31 | -22 | 83 |
| | Economically inactive | 977 | 993 | -15 | -224 | 193 |
| Not full-time education | Total | 1,598 | 1,803 | -205 | -444 | 34 |
| | Employed | 1,186 | 1,363 | -178 | -399 | 44 |
| | Unemployed | 82 | 120 | -38 | -113 | 37 |
| | Economically inactive | 330 | 319 | 11 | -135 | 156 |

Table 7: Educational status and labour market status for women aged from 16 to 24 years (not seasonally adjusted)

The large reported differences, some of which were highly statistically significant despite relatively small unweighted cohort sizes, require further analysis. There are several potential explanations for the significantly higher number of people estimated to be in full-time education by the LMS Statistical Test. Because the higher number in full-time education on the LMS was driven mainly by those in employment, more understanding is needed of how certain education courses are classified on the LMS compared with the LFS.

There are several potential factors that may be driving this difference, all of which require further consideration. The use of AddressBase as the sampling frame for the LMS, compared with the Postcode Address File (PAF) used for the LFS, may have led to differences in the types of addresses sampled; further details on these sampling frames can be found in the LMS statistical test technical report. Operational effects, including the respective field forces' relative experience of the subject matter and their different working patterns, could also account for some of the difference in the estimates. Differences in questionnaire design between the LMS and LFS, in question ordering as well as question wording, may be another factor. These possibilities, together with any potential mode effects, the effect of proxy responses and possible differences in field practices, will be subject to further consideration and analysis.

8. Full-time and part-time workers (not seasonally adjusted)

Main findings:

- No significant differences were observed between the two comparative datasets in estimates of the number of employees, either full-time or part-time.

- Significantly fewer people were classified as self-employed in the estimates produced by the Labour Market Survey (LMS) than in those from the Labour Force Survey (LFS); this difference in self-employment was also significant for women.

Tables 8 and 9 present estimates for the numbers of full-time and part-time workers. For both the Labour Market Survey (LMS) Test and the Labour Force Survey (LFS), respondents self-classified their working status as either full-time or part-time, although there were differences in the question variables and guidance respondents were shown (see Appendix B).

Table 8 presents data on respondents who reported themselves as “employees” rather than “self-employed”. The LMS estimates in Table 8 show a slightly higher number of employees in the labour market: 593,000 more than the LFS comparative dataset (2.2% more in relative terms). This higher overall LMS figure holds for both full-time and part-time employees.

However, when these estimates are considered by sex, the LMS test estimated fewer full-time (223,000) and more part-time (218,000) male employees than the LFS comparative dataset. The opposite was true for women: the LMS estimated 391,000 more full-time and 96,000 fewer part-time female employees. None of the differences between the LMS and the LFS shown in Table 8 were statistically significant.

Statistical estimates, thousands (November 2018 to January 2019)

| Sex | Measure¹ | LMS dataset | LFS comparative dataset | Difference in estimates² | 95% Confidence interval lower bound | 95% Confidence interval upper bound |
|---|---|---|---|---|---|---|
| All | Total | 26,981 | 26,388 | 593 | -74 | 1,260 |
| | Full-time | 19,697 | 19,529 | 168 | -564 | 900 |
| | Part-time | 6,962 | 6,840 | 122 | -399 | 642 |
| Men | Total | 13,483 | 13,345 | 138 | -359 | 635 |
| | Full-time | 11,670 | 11,893 | -223 | -770 | 324 |
| | Part-time | 1,654 | 1,437 | 218 | -96 | 531 |
| Women | Total | 13,498 | 13,043 | 455 | -45 | 955 |
| | Full-time | 8,027 | 7,636 | 391 | -133 | 914 |
| | Part-time | 5,308 | 5,404 | -96 | -581 | 389 |

Table 8: Full-time and part-time employees (not seasonally adjusted)

Table 9 compares estimates of the numbers of full-time and part-time workers who reported themselves to be self-employed. Unlike the estimates for employees, statistically significant differences were observed between the estimates from the LMS and the LFS comparative dataset. As Table 9 shows, the LMS estimate of the total number of self-employed workers was 569,000 (11.5%) lower than the estimate from the LFS comparative dataset.

Additionally, a statistically significant difference was observed for the total number of women in self-employment, with 301,000 (18.1%) fewer classified as self-employed in LMS estimates compared with those produced from the LFS comparative dataset. Though all categories of self-employment shown in Table 9 were estimated to be lower on the LMS for both men and women, there were no other statistically significant differences observed.

Statistical estimates, thousands (November 2018 to January 2019)

| Sex | Measure¹ | LMS dataset | LFS comparative dataset | Difference in estimates² | 95% Confidence interval lower bound | 95% Confidence interval upper bound |
|---|---|---|---|---|---|---|
| All | Total | 4,371 | 4,939 | -569** | -994 | -144 |
| | Full-time | 3,091 | 3,451 | -360 | -765 | 45 |
| | Part-time | 1,275 | 1,474 | -199 | -458 | 59 |
| Men | Total | 3,008 | 3,275 | -267 | -640 | 105 |
| | Full-time | 2,487 | 2,662 | -175 | -557 | 207 |
| | Part-time | 516 | 602 | -86 | -264 | 91 |
| Women | Total | 1,363 | 1,664 | -301* | -555 | -47 |
| | Full-time | 604 | 789 | -185 | -375 | 6 |
| | Part-time | 759 | 872 | -113 | -322 | 96 |

Table 9: Full-time and part-time self-employed workers (not seasonally adjusted)

The lower estimate for women appears to be one of the main drivers of the difference in total reported self-employment between the two datasets. Although sampling variability cannot be ruled out for men, the LMS estimates were lower for both sexes.

There are a number of potential explanations for the lower number of self-employed respondents estimated on the LMS. The LMS questionnaire, which has undergone substantial qualitative research across many rounds of testing (see the LMS statistical test technical report for further details), may be one factor. Differences in questionnaire specification need to be analysed and considered as one part of a thorough evaluation of the effects of differences in survey design and data collection practices.

The effects of the differences in survey design may also have been influenced by the period over which data collection took place, with those identifying as self-employed working more sporadically over the Christmas period and winter months. The field patterns used by LMS-contracted interviewers may also have led to different non-response effects from those on the LFS, as self-employed people may be more difficult to contact because they work irregular hours. However, the convenience of the online mode may have mitigated this. As with the other estimates compared in this report, a better understanding of demographic and survey differences is needed to identify these drivers.

9. Actual weekly hours worked (not seasonally adjusted)

Main findings:

- Significantly fewer total weekly hours worked were estimated for the workforce from the Labour Market Survey (LMS) than from the Labour Force Survey (LFS) comparative dataset.

- Several questionnaire and operational differences may be driving these differences. They may include the use of a rolling reference week on the LMS, which was implemented to reduce potential recall error in the online collection mode; this design change will be evaluated alongside user needs to ensure the LMS produces reliable estimates.

Table 10 compares the estimate for the total weekly hours actually worked across Great Britain. The estimate is derived from the aggregated number of hours (including paid and unpaid overtime) that respondents reported they actually worked during the reference week.

The table shows that the Labour Market Survey (LMS) produced an estimate of 30.5 million (3.1%) fewer weekly hours worked across Great Britain than the equivalent Labour Force Survey (LFS) estimate; this was a statistically significant difference. The main driver of this difference was the total weekly hours worked by men, with 24.3 million (4.1%) fewer hours reported for the LMS than for the LFS comparative dataset (also statistically significant). Fewer weekly hours were also reported for women on the LMS, but this difference was smaller (6.8 million, or 1.7%) and was not statistically significant.
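The relative differences quoted for hours express each difference as a percentage of the LFS comparative estimate. The short check below recomputes them from the rounded values in Table 10 (millions of hours), so the last digit can differ from figures calculated on unrounded data. The helper name is hypothetical.

```python
def relative_difference(lms, lfs):
    """LMS minus LFS comparative estimate, as a percentage of the LFS estimate."""
    return 100 * (lms - lfs) / lfs


# Total weekly hours, millions (LMS, LFS comparative), from Table 10.
hours = {"All": (956.6, 987.1), "Men": (565.7, 590.0), "Women": (390.6, 397.4)}
for sex, (lms, lfs) in hours.items():
    print(f"{sex}: {lms - lfs:+.1f} million hours ({relative_difference(lms, lfs):+.1f}%)")
```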

Statistical estimates and statistical difference, millions of hours (November 2018 to January 2019)

| Sex | LMS dataset | LFS comparative dataset | Difference in estimates¹ | 95% confidence interval, lower bound | 95% confidence interval, upper bound |
|---|---|---|---|---|---|
| All | 956.6 | 987.1 | -30.5* | -57.5 | -3.6 |
| Men | 565.7 | 590.0 | -24.3* | -46.4 | -2.3 |
| Women | 390.6 | 397.4 | -6.8 | -29.6 | 16.1 |

Table 10: Total weekly hours worked (not seasonally adjusted)

Table 11 presents the average number of hours actually worked per week across the workforce, with separate estimates for full-time workers, part-time workers and second jobs. It shows that the lower weekly hours reported on the LMS are driven by statistically significant differences in the average weekly hours worked by full-time workers (1.7 hours fewer), and that this holds for both men (1.5 hours) and women (1.9 hours).

However, these estimates also indicate that part-time workers on the LMS reported working more hours per week on average than in the equivalent LFS dataset, although the difference for all part-time workers (0.9 hours) was not statistically significant. The additional 2.4 hours worked on average by male part-time workers on the LMS was statistically significant. Women working part-time also reported higher average weekly hours on the LMS, but this difference was not statistically significant.
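The significance markers for the part-time rows of Table 11 line up with a simple check of whether each 95% confidence interval excludes zero. The sketch below assumes that rule; the report's section on the method of statistical testing describes the approach actually used, and the distinction between the single and double asterisks is not modelled here. The `Comparison` type and `excludes_zero` function are hypothetical.

```python
from typing import NamedTuple


class Comparison(NamedTuple):
    group: str
    measure: str
    difference: float  # LMS minus LFS, hours per week
    ci_lower: float    # 95% confidence interval, lower bound
    ci_upper: float    # 95% confidence interval, upper bound


def excludes_zero(c: Comparison) -> bool:
    """True when the 95% confidence interval for the difference does not contain zero."""
    return c.ci_lower > 0 or c.ci_upper < 0


# Part-time rows transcribed from Table 11.
PART_TIME = [
    Comparison("All", "Part-time workers", 0.9, -0.0, 1.8),
    Comparison("Men", "Part-time workers", 2.4, 0.2, 4.6),
    Comparison("Women", "Part-time workers", 0.4, -0.7, 1.4),
]

for c in PART_TIME:
    label = "interval excludes zero" if excludes_zero(c) else "interval includes zero"
    print(f"{c.group}, {c.measure}: {c.difference:+.1f} hours, {label}")
```

The rounded lower bound of -0.0 for all part-time workers shows why such a check is best run on unrounded bounds.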

Statistical estimates and statistical difference, hours per week (November 2018 to January 2019)

| Sex | Measure¹ | LMS dataset | LFS comparative dataset | Difference in estimates² | 95% confidence interval, lower bound | 95% confidence interval, upper bound |
|---|---|---|---|---|---|---|
| All | All workers | 30.4 | 31.3 | -0.9** | -1.5 | -0.3 |
| | Full-time workers | 35.0 | 36.7 | -1.7** | -2.5 | -0.8 |
| | Part-time workers | 17.6 | 16.7 | 0.9 | -0.0 | 1.8 |
| | Second jobs | 8.8 | 8.4 | 0.4 | -1.6 | 2.4 |
| Men | All workers | 34.2 | 35.3 | -1.1* | -2.1 | -0.1 |
| | Full-time workers | 36.6 | 38.0 | -1.5** | -2.6 | -0.3 |
| | Part-time workers | 18.7 | 16.3 | 2.4* | 0.2 | 4.6 |
| | Second jobs | 11.1 | 9.1 | 2.0 | -2.1 | 6.1 |
| Women | All workers | 26.2 | 26.8 | -0.6 | -1.5 | 0.3 |
| | Full-time workers | 32.5 | 34.4 | -1.9** | -3.3 | -0.6 |
| | Part-time workers | 17.2 | 16.9 | 0.4 | -0.7 | 1.4 |
| | Second jobs | 6.7 | 7.8 | -1.0 | -3.0 | 1.0 |

Table 11: Average actual weekly hours worked (not seasonally adjusted)

Overall, Tables 10 and 11 show that both the total number of hours worked and average weekly hours were significantly lower on the LMS, and that the main driver of this was full-time workers. Further work is required to fully explore and understand these differences.

Several questionnaire and operational differences will be explored when reflecting on these statistical differences. For example, the rolling reference week used on the LMS may reduce recall bias because of the shorter “lag” between response and the reporting period (for clarification on the differences in the reference week period, see the technical report). Further analysis comparing the distribution of reported hours across the two datasets will likely provide insights that can inform future LMS survey design.
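One way to carry out the distributional comparison mentioned above is to compare the weighted share of workers falling into hours bands on each dataset. The sketch below is illustrative only: the band edges, the `(weight, hours)` record format and the function names are hypothetical assumptions, not part of the LMS or LFS outputs.

```python
from bisect import bisect_right

# Hypothetical hours bands: each edge starts a new band.
BAND_EDGES = [16, 30, 40, 49]
BAND_LABELS = ["under 16", "16 to under 30", "30 to under 40", "40 to under 49", "49 and over"]


def weighted_distribution(records: list[tuple[float, float]]) -> dict[str, float]:
    """Share of weighted workers in each hours band; records are (weight, hours) pairs."""
    totals = [0.0] * len(BAND_LABELS)
    for weight, hours in records:
        # bisect_right gives the index of the band whose range contains `hours`.
        totals[bisect_right(BAND_EDGES, hours)] += weight
    grand_total = sum(totals)
    if grand_total == 0:
        raise ValueError("no weighted records")
    return {label: t / grand_total for label, t in zip(BAND_LABELS, totals)}


def share_differences(lms_records, lfs_records) -> dict[str, float]:
    """Percentage-point difference (LMS minus LFS) in each band's share of workers."""
    lms = weighted_distribution(lms_records)
    lfs = weighted_distribution(lfs_records)
    return {label: 100.0 * (lms[label] - lfs[label]) for label in BAND_LABELS}


# Toy values for illustration only, not survey data.
print(share_differences([(100.0, 38.0), (100.0, 20.0)], [(100.0, 42.0), (100.0, 20.0)]))
```

Comparing shares rather than raw weighted counts keeps the comparison focused on the shape of each distribution, separately from any difference in the overall level of employment between the two datasets.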

10. Conclusions

This report has outlined the main findings from the mixed-mode test of the Labour Market Survey (LMS) covering the period between November 2018 and February 2019. An important objective of this test was to enable a comparison of several headline labour market variables derived from both the LMS and the Labour Force Survey (LFS) over the same period.

The analyses presented here provide some initial indications of the potential statistical impact of the prototype alternative survey design for collecting and producing labour market statistics, and serve as a signpost for future work in this ongoing research programme. Further analyses of this test will be conducted, alongside further research and testing, to provide a more comprehensive understanding of these results.

The findings from this test, in particular that there are no significant differences in the headline labour market estimates (Tables 3 and 4), are encouraging given the breadth of differences in the design of the LMS and the challenges other statistical organisations have faced when transforming surveys to include an online collection mode. Below the headline level, however, some significant differences have been highlighted in other LMS and LFS comparisons. These include the estimates of the educational status of people aged 16 to 24 years (Tables 5, 6 and 7); self-employment (Table 9); and the total and average number of hours worked (Tables 10 and 11). These differences require further evaluation of the comparative survey designs of the LMS and LFS to improve understanding of the strengths and weaknesses of these estimates. Only through this additional research can the implications of these differences be understood and fully contextualised.

Overall, these findings are a first step towards validating the approach taken by the Office for National Statistics (ONS) of transforming survey design to optimise for online-first, mixed-mode data collection, rather than simply translating existing survey instruments and processes to new modes of collection. It must, however, be emphasised that the absence of significant differences in this test does not mean that significant differences will not be observed in future testing carried out over longer periods and with larger sample sizes. Finally, it is important to restate that these results are not official statistics relating to the labour market and should be considered experimental outputs from an ongoing research programme.

11. Appendix A - Cohorts included in the LMS analysis data sub-set

Figure 1: Cohorts included in the Labour Market Survey analysis data sub-set

Source: Office for National Statistics – Labour Market Survey Statistical Test


12. Appendix B - Additional downloads

List of variables and derived variables

Chart flows for LMS derived variables

Labour Market Survey flow charts

Detailed comparison tables for key statistical estimates

Educational status and labour market status for people aged from 16 to 24 years (not seasonally adjusted)

Full-time, part-time and temporary workers (not seasonally adjusted)

Actual weekly hours worked (not seasonally adjusted)

LMS questionnaire example

LMS Statistical Test Questionnaire

LFS Questionnaire – LFS User Guide, Vol 2: User guide to the LFS questionnaire
